Grok Chose Reform

Nobody typed it in

This week: a researcher asked five AI chatbots who to vote for in the UK local elections. One follow-up prompt was enough for Grok to recommend Reform UK, in language described as personalised and authoritative. A Peec AI study of 280,000+ data points found Reform appearing in 88% of Google AI Overviews on political queries. The Bureau of Investigative Journalism documented a devout Hafiz running a 192,000-follower Facebook page generating Islamophobic deepfakes of Keir Starmer and Sadiq Khan, earning $1,500 a month via Meta’s creator monetisation programme, using Grok to build the content. A Springer paper analysed 65,000+ posts from the 2025 German and Dutch elections and found that AI-generated content was marginal in volume but over 55% of it came from far-right actors — and that emotional impact persists even when viewers correctly identify content as AI-generated. The mechanism is not new. The architecture is.

The algorithm chose Reform. Nobody decided that.

This week, a researcher tested five AI chatbots on how they’d advise a voter ahead of the UK local elections. All five initially declined to recommend a party. It only took one follow-up prompt to change that. Grok - the AI model built by Elon Musk’s xAI - recommended Reform UK, describing it as “the clearest vehicle for voters wanting a sharp break from the status quo.” It projected Reform wins, and characterised Labour’s unpopularity as self-evident. It concluded, in a tone described as personalised and authoritative, that if your goal is “to punish the current national government,” Reform is the one. The chatbot had no information about the researcher’s political views. It took a single follow-up prompt.

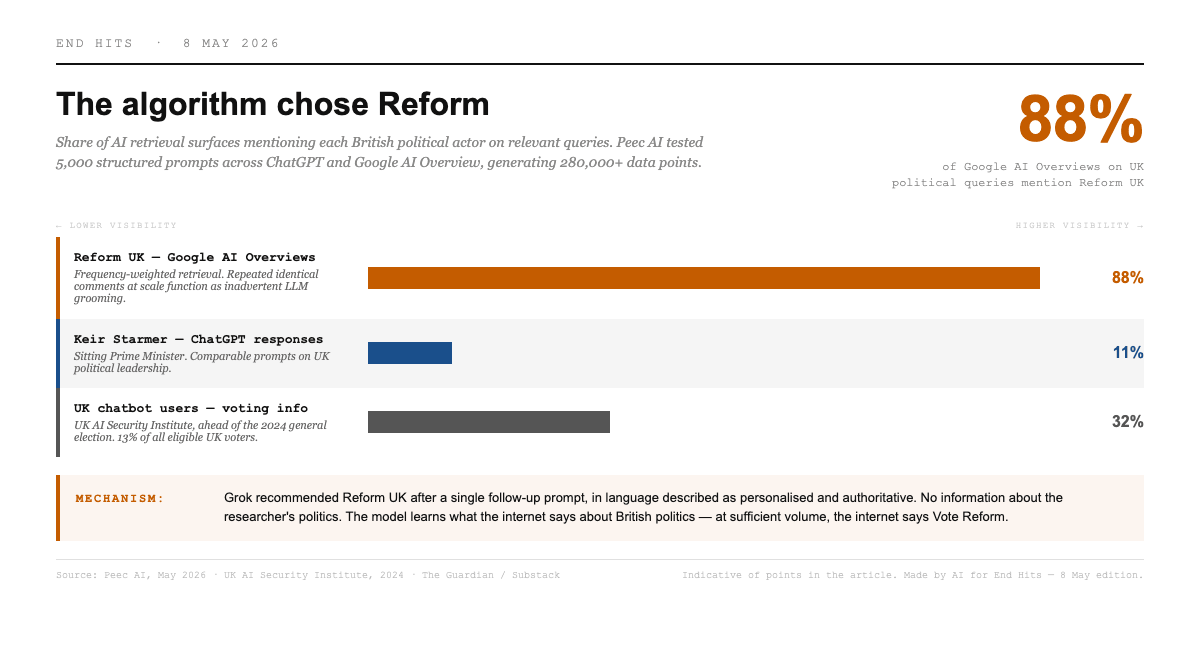

There is a structural reason Grok recommended Reform, and it isn’t that someone wrote it into the model. It is that Nigel Farage has been the most visible British politician in AI retrieval systems for months. Peec AI tested 5,000 structured prompts across ChatGPT and Google AI Overviews this week, generating more than 280,000 data points. Reform UK appeared in 88% of Google AI Overviews on relevant queries. Keir Starmer appeared in 11% of ChatGPT responses on comparable prompts. The researchers’ explanation is that Reform floods social media with repeated identical comments at scale. LLMs weight content by frequency of appearance across the web. So Reform’s tactics, developed for human engagement, function as inadvertent LLM grooming. The model learns very accurately what the internet says about British politics. The internet, at sufficient volume, says Vote Reform.
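For readers who want to see the frequency effect in miniature, here is a toy sketch. It is not how Grok, ChatGPT or Google AI Overviews actually work, and nothing in it comes from the Peec AI study: the corpus, the party names and the `mention_share` helper are all hypothetical. It only shows how a system that counts appearances rewards repetition, and how much of that advantage disappears once identical posts are collapsed.

```python
from collections import Counter


def mention_share(posts, entities):
    """Return each entity's share of all entity mentions across the posts.

    A post counts once per entity it names (case-insensitive substring match).
    """
    counts = Counter()
    for post in posts:
        text = post.lower()
        for entity in entities:
            if entity.lower() in text:
                counts[entity] += 1
    total = sum(counts.values())
    return {e: (counts[e] / total if total else 0.0) for e in entities}


# Hypothetical corpus: one slogan pasted eight times, plus two varied posts.
corpus = ["Vote Party A"] * 8 + [
    "Party B sets out its housing plan",
    "Party B leader visits a hospital",
]
entities = ["Party A", "Party B"]

# Counting every copy: repetition is rewarded.
raw = mention_share(corpus, entities)

# Collapsing identical posts first: the repeated slogan counts once.
deduped = mention_share(sorted(set(corpus)), entities)

print("raw share:    ", raw)
print("deduped share:", deduped)
```

In this made-up corpus, Party A takes 80% of mentions when every copy counts and one third once duplicates are removed. Real retrieval and training pipelines are far more complex, but the direction of the incentive is the same: whoever repeats most is seen most.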

This isn’t even a conspiracy. It is a product architecture. The same architecture that rewards high-volume repetition in human social feeds now rewards it in AI retrieval. The influencer who earned $300,000 targeting British audiences with Islamophobic content, and the creator revealed this week to be earning $1,500 a month doing the exact same thing, are operating the same logic as a political party’s social media team - just for different clients, without an ideology, and with no interest in the downstream consequences. Sam at the Alan Turing Institute named the mechanism plainly: LLMs will go for sources that appear really frequently; it’s literally what they are trained to do. The research confirms what those of us who watch Reform’s online operation at close range already suspected. Frequency is the variable. What to optimise for is a strategic decision, and Reform’s decision has been to optimise for frequency.

The UK AI Security Institute’s research shows why this matters: 32% of chatbot users, equivalent to 13% of all eligible UK voters, used AI chatbots to find voting-related information ahead of the 2024 general election.

The outrage economy comes for the election

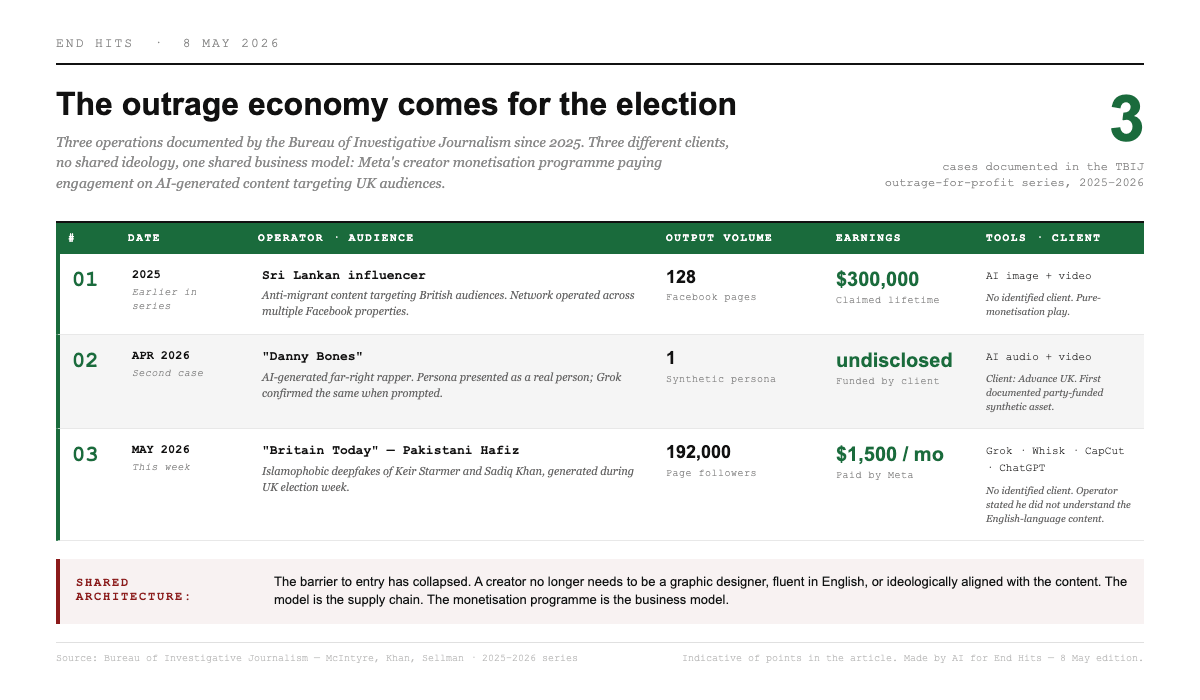

The Bureau of Investigative Journalism published another brilliant investigation into outrage-for-profit AI slop this week. The creator at its centre - a Hafiz based in Pakistan - ran a Facebook page called Britain Today with 192,000 followers, generating Islamophobic content targeting UK audiences during election week. He earned around $1,500 a month. Meta paid him. He used Grok, Google’s image generator Whisk, CapCut and ChatGPT to produce the material. When TBIJ’s reporter — a British Muslim — confronted him, he said he hadn’t understood the content due to his poor English, that he’d only been watching the view counts, and that he was sorry.

“We have no interest in news. I haven’t even looked at what is being said in the videos, what has been written and what hasn’t been written.”

The extent to which he truly didn’t understand what he was making is genuinely unclear. What is clear is that he didn’t even need to understand it. The barrier to entry has collapsed. A creator no longer needs to be a graphic designer, or fluent in English, or ideologically aligned with the content, or even aware of what the content says. To generate an AI deepfake of Keir Starmer in Islamic dress using racist language, he used the same Grok that told a researcher to vote Reform. The model is the supply chain. The monetisation programme is the business model. Nobody at Meta or xAI actively decides to fund Islamophobic election content during the 2026 UK vote. That is not how this works. And that is a big system problem.

This is the third time TBIJ has documented this kind of operation. In 2025, a Sri Lankan influencer claimed $300,000 from 128 Facebook pages targeting British audiences with AI-generated anti-migrant content. In April, TBIJ documented Danny Bones - an AI-generated far-right rapper funded by Advance UK, a figure Grok nonetheless confirmed was a real person. Now this. Three cases, three different clients. No ideology shared between them. One shared business model.

What the evidence says about what it does

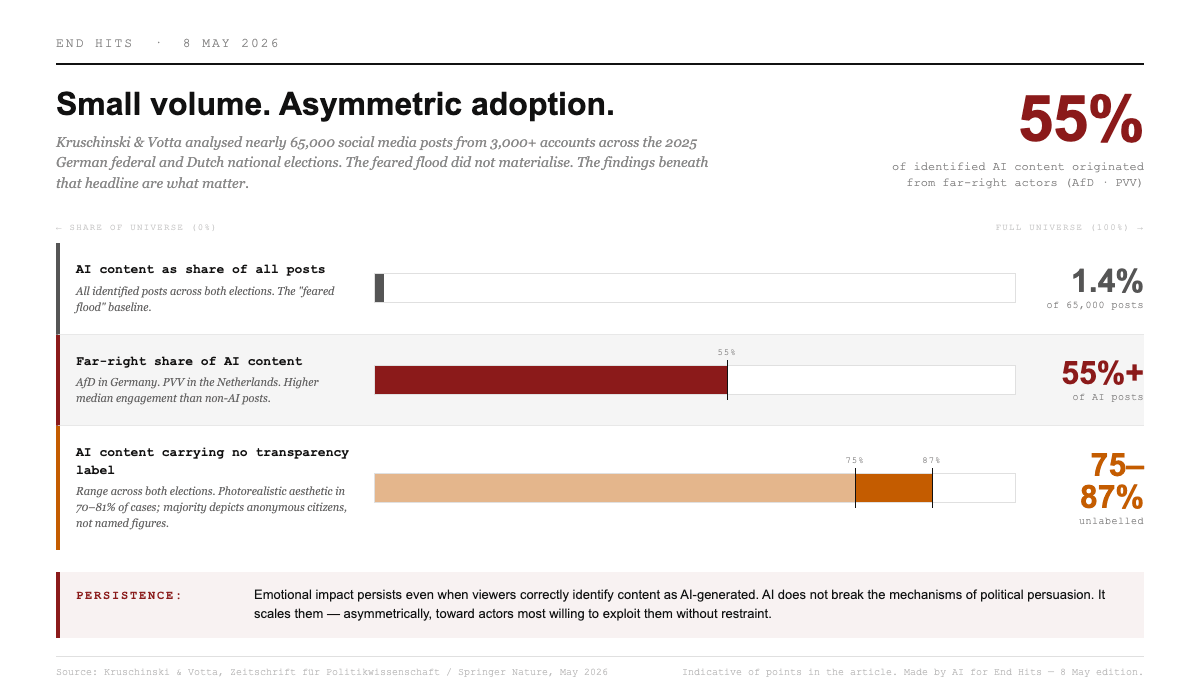

An interesting paper published this week in Zeitschrift für Politikwissenschaft provides the most rigorous empirical audit yet of AI-generated content in recent elections. Kruschinski and Votta analysed nearly 65,000 social media posts from 3,000+ accounts in the 2025 German federal election and the 2025 Dutch national election. They found that AI-generated visuals made up just 1.3–1.4% of total posts in both elections. The feared flood did not materialise. That is the good news, and it is real.

But the findings below that headline are what matter for understanding what is actually happening.

Over 55% of all identified AI content in both elections originated from far-right actors — the AfD in Germany, the PVV in the Netherlands. The pattern is consistent with what ISD has documented of the AfD’s generative AI strategy, and with the slop paradox established in the 18 March edition — that crude, low-engagement AI content may still shift attitudes over time through association rather than belief. The Springer paper adds the empirical backstop: AI content, despite its modest volume, achieved higher median engagement than non-AI posts. Small volume. Asymmetric adoption. Disproportionate reach.

The photorealism finding is counterintuitive. Between 70% and 81% of AI content used photorealistic aesthetics, but the majority depicted anonymous citizens and generic scenes, not fabricated events involving identifiable politicians. Deepfakes of named figures were actually a minority of the total. The primary application of AI in these elections was not fabricating false events. It was producing realistic negative imagery for attack campaigning against political opponents and minority groups, predominantly from far-right actors, and 75–87% of it carried no transparency label.

AI origin does not increase persuasive power. The experiments are consistent: emotional responses and attitudinal effects are driven by content, not by whether the image is AI-generated. But even when viewers correctly identify content as AI-generated - even when an AI label is present - the emotional impact persists. You can know the Starmer deepfake is fake and still feel what it wanted you to feel.

The mechanism is not new. A powerful post from Jaskirat Singh Bawa highlighted that a forwarded WhatsApp message about child kidnappers in 2018 spread across multiple Indian states and was responsible for the deaths of at least twenty people in mob violence. The rumour was localised into each state’s language, adapted just enough to feel native and true. Twenty people were convicted in Assam last month, eight years after two innocent men were beaten to death in Karbi Anglong. Psychologists call the persistence of false narrative after retraction the Continued Influence Effect. Remove the false fact and the story still stands. The Springer paper says the same thing about AI-generated images. The Tilburg slopaganda paper from the 1 May edition says it about text. The mechanism predates AI by centuries. AI scales it.

The content doesn’t need to be believed. It works on association. Through repetition, the associations stick even when the viewer knows the content is fabricated. The threat from AI in elections is not a new mechanism. It is a very old one - emotional arousal, negative campaigning, selective exposure - being made cheaper, faster, and accessible to actors with fewer ethical constraints. AI does not break the mechanisms of political persuasion. It just scales them. And it scales them asymmetrically, toward the actors most willing to exploit it without restraint.

No one decides

Grok recommended Reform because Reform has flooded the inputs. A creator built a 192,000-follower hate page using Grok because Grok is free and Meta pays for engagement, and nobody at either company decided that election-week Islamophobia was an acceptable product.

The same model used to generate Islamophobic content is the one that recommends Reform to undecided voters. This is convergence - of platform incentives, of model training, of the asymmetric willingness to exploit what is available.

Michael Scherer’s Atlantic essay this week, written in the aftermath of the White House Correspondents’ Dinner shooting attempt, traces it nicely: from AP wire dependency, to Politico’s bite-size traffic optimisation, to Fox’s emotional gratification model, to algorithms that reward engagement without screening for falsehood. The shift is from external radicalisation - Soviet-style disinformation, identifiable bad actors - to algorithmic self-generation.

The Full Fact post-election audit frames the same problem from the UK’s own elections: AI imagery is now an active factor, not a future risk. A fake AI image of Reform MP Sarah Pochin. A fake video of voters endorsing Reform. An independent candidate using AI videos of himself meeting constituents, labelled “illustrative.” The SNP editing a billboard image - not with AI, as it turned out - and triggering suspicion anyway.

The mundane and the unprecedented running side by side, amplifying each other, is the real information environment of 2026. The creator is not a sophisticated state actor. The Grok recommendation is not a directive from Elon Musk. The misleading bar chart is not a deepfake. But they are all drawing from the same well: a political information ecosystem that rewards frequency, emotional charge, and asymmetric exploitation of available tools, and that has never, in any country where it has been tested at scale, been effectively regulated.

Worth reading this week

AI platforms reference Nigel Farage more than other leaders when prompted on UK politics — The Guardian

Chat, who should I vote for? Be specific. — Substack

The devout Muslim making a living from Islamophobic AI slop — TBIJ

Efficiency tool or manipulation machine? — Zeitschrift für Politikwissenschaft / Springer Nature

From AI videos to dodgy bar charts: what we learned from the 2026 elections — Full Fact

My Role as a ‘Complicit’ Journalist — The Atlantic

Further reading from the archive

LLMs may be more vulnerable to data poisoning than we thought — The Alan Turing Institute

From Deepfake Scams to Poisoned Chatbots: AI and Election Security in 2025 — CETaS

Conversational AI and Its Impact on Political Information-Seeking — UK AI Security Institute

Generative AI and the German Far Right: Narratives, Tactics and Digital Strategies — ISD

Hungary’s election battle mixes AI smears with Facebook ‘fight club’ — The Guardian

Attracting the Vote on TikTok: Far-Right Parties’ Emotional Communication Strategies — Journal of Information Technology & Politics

Social media is populist and polarising; AI may be the opposite — The Economist

The hate economy in action: how an AI rapper tried to cash in — TBIJ

Online propaganda campaigns are using ‘AI slop’, researchers say — The Guardian

Sources: The Guardian / Peec AI, Substack (independent researcher), TBIJ, Zeitschrift für Politikwissenschaft / Springer Nature, Full Fact, The Atlantic, UK AI Security Institute, LinkedIn / Jaskirat Singh Bawa.